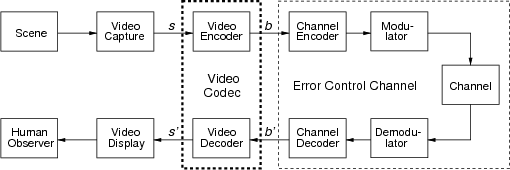

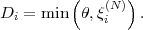

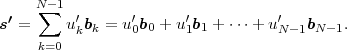

Fig. 1.1 Typical structure of a video transmission system.

This is the html version of the book:

Thomas Wiegand and Heiko Schwarz (2010): "Source Coding: Part I of Fundamentals of Source and Video Coding", Now Publishers, Foundations and Trends® in Signal Processing: Vol. 4: No 1-2, pp 1-222. (pdf version of the book)

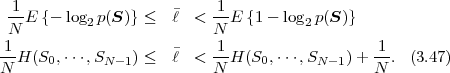

1 Introduction

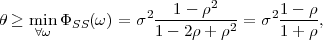

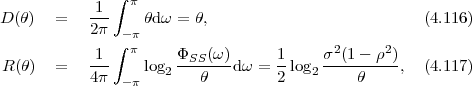

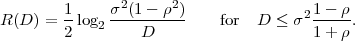

The advances in source coding technology along with the rapid developments and improvements of network infrastructures, storage capacity, and computing power are enabling an increasing number of multimedia applications. In this text, we will describe and analyze fundamental source coding techniques that are found in a variety of multimedia applications, with the emphasis on algorithms that are used in video coding applications. The present first part of the text concentrates on the description of fundamental source coding techniques, while the second part describes their application in modern video coding.

The application areas of digital video today range from multimedia messaging, video telephony, and video conferencing over mobile TV, wireless and wired Internet video streaming, standard- and high-definition TV broadcasting, subscription and pay-per-view services to personal video recorders, digital camcorders, and optical storage media such as the digital versatile disc (DVD) and Blu-Ray disc. Digital video transmission over satellite, cable, and terrestrial channels is typically based on H.222.0/MPEG-2 systems [37], while wired and wireless real-time conversational services often use H.32x [32, 33, 34] or SIP [64], and mobile transmission systems using the Internet and mobile networks are usually based on RTP/IP [68]. In all these application areas, the same basic principles of video compression are employed.

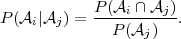

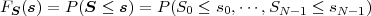

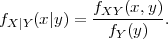

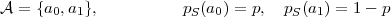

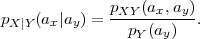

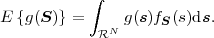

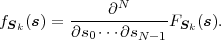

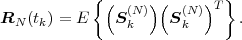

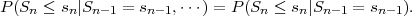

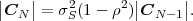

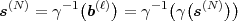

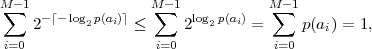

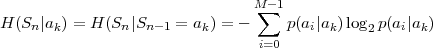

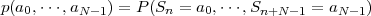

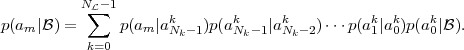

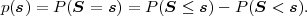

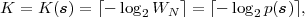

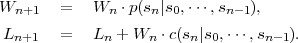

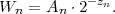

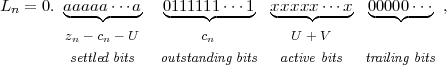

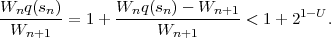

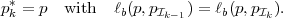

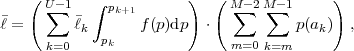

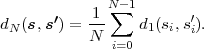

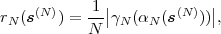

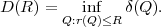

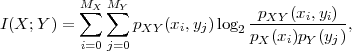

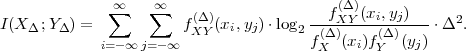

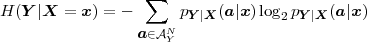

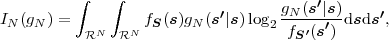

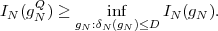

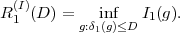

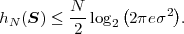

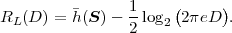

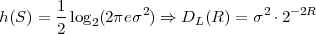

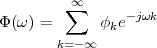

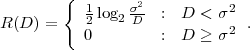

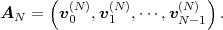

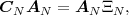

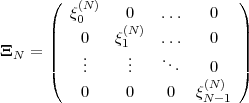

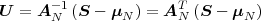

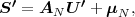

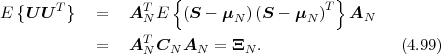

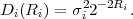

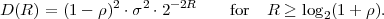

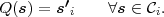

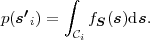

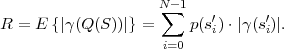

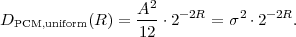

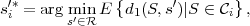

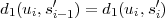

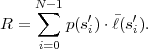

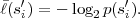

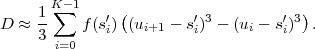

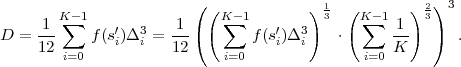

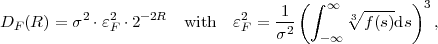

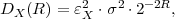

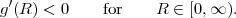

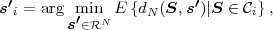

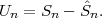

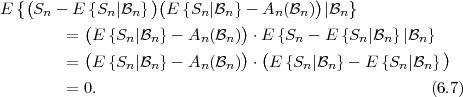

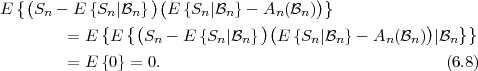

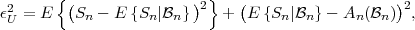

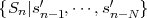

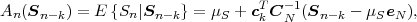

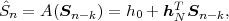

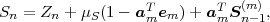

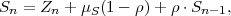

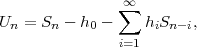

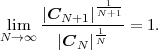

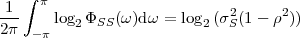

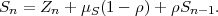

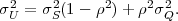

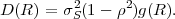

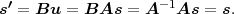

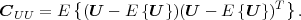

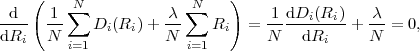

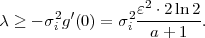

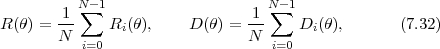

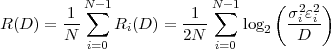

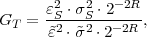

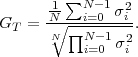

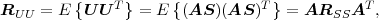

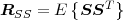

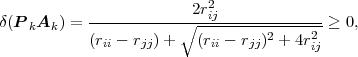

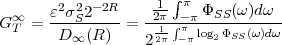

The block structure for a typical video transmission scenario is illustrated in Fig. 1.1. The video capture generates a video signal s that is discrete in space and time. Usually, the video capture consists of a camera that projects the 3-dimensional scene onto an image sensor. Cameras typically generate 25 to 60 frames per second. For the considerations in this text, we assume that the video signal s consists of progressively-scanned pictures. The video encoder maps the video signal s into the bitstream b. The bitstream is transmitted over the error control channel and the received bitstream b′ is processed by the video decoder that reconstructs the decoded video signal s′ and presents it via the video display to the human observer. The visual quality of the decoded video signal s′ as shown on the video display affects the viewing experience of the human observer. This text focuses on the video encoder and decoder part, which is together called a video codec.

The error characteristic of the digital channel can be controlled by the channel encoder, which adds redundancy to the bits at the video encoder output b. The modulator maps the channel encoder output to an analog signal, which is suitable for transmission over a physical channel. The demodulator interprets the received analog signal as a digital signal, which is fed into the channel decoder. The channel decoder processes the digital signal and produces the received bitstream b′, which may be identical to b even in the presence of channel noise. The sequence of the five components, channel encoder, modulator, channel, demodulator, and channel decoder, are lumped into one box, which is called the error control channel. According to Shannon’s basic work [69, 70] that also laid the ground to the subject of this text, by introducing redundancy at the channel encoder and by introducing delay, the amount of transmission errors can be controlled.

The basic communication problem may be posed as conveying source data with the highest fidelity possible without exceeding an available bit rate, or it may be posed as conveying the source data using the lowest bit rate possible while maintaining a specified reproduction fidelity [69]. In either case, a fundamental trade-off is made between bit rate and signal fidelity. The ability of a source coding system to suitable choose this trade-off is referred to as its coding efficiency or rate distortion performance. Video codecs are thus primarily characterized in terms of:

However, in practical video transmission systems, the following additional issues must be considered:

The practical source coding design problem can be stated as follows: Given a maximum allowed delay and a maximum allowed complexity, achieve an optimal trade-off between bit rate and distortion for the range of network environments envisioned in the scope of the applications.

1.2 Scope and Overview of the Text

This text provides a description of the fundamentals of source and video coding. It is aimed at aiding students and engineers to investigate the subject. When we felt that a result is of fundamental importance to the video codec design problem, we chose to deal with it in greater depth. However, we make no attempt to exhaustive coverage of the subject, since it is too broad and too deep to fit the compact presentation format that is chosen here (and our time limit to write this text). We will also not be able to cover all the possible applications of video coding. Instead our focus is on the source coding fundamentals of video coding. This means that we will leave out a number of areas including implementation aspects of video coding and the whole subject of video transmission and error-robust coding.

The text is divided into two parts. In the first part, the fundamentals of source coding are introduced, while the second part explains their application to modern video coding.

In the present first part, we describe basic source coding techniques that are also found in video codecs. In order to keep the presentation simple, we focus on the description for 1-d discrete-time signals. The extension of source coding techniques to 2-d signals, such as video pictures, will be highlighted in the second part of the text in the context of video coding. Chapter 2 gives a brief overview of the concepts of probability, random variables, and random processes, which build the basis for the descriptions in the following chapters. In Chapter 3, we explain the fundamentals of lossless source coding and present lossless techniques that are found in the video coding area in some detail. The following chapters deal with the topic of lossy compression. Chapter 4 summarizes important results of rate distortion theory, which builds the mathematical basis for analyzing the performance of lossy coding techniques. Chapter 5 treats the important subject of quantization, which can be considered as the basic tool for choosing a trade-off between transmission bit rate and signal fidelity. Due to its importance in video coding, we will mainly concentrate on the description of scalar quantization. But we also briefly introduce vector quantization in order to show the structural limitations of scalar quantization and motivate the later discussed techniques of predictive coding and transform coding. Chapter 6 covers the subject of prediction and predictive coding. These concepts are found in several components of video codecs. Well-known examples are the motion-compensated prediction using previously coded pictures, the intra prediction using already coded samples inside a picture, and the prediction of motion parameters. In Chapter 7, we explain the technique of transform coding, which is used in most video codecs for efficiently representing prediction error signals.

The second part of the text will describe the application of the fundamental source coding techniques to video coding. We will discuss the basic structure and the basic concepts that are used in video coding and highlight their application in modern video coding standards. Additionally, we will consider advanced encoder optimization techniques that are relevant for achieving a high coding efficiency. The effectiveness of various design aspects will be demonstrated based on experimental results.

1.3 The Source Coding Principle

The present first part of the text describes the fundamental concepts of source coding. We explain various known source coding principles and demonstrate their efficiency based on 1-d model sources. For additional information on information theoretical aspects of source coding the reader is referred to the excellent monographs in [11, 22, 4]. For the overall subject of source coding including algorithmic design questions, we recommend the two fundamental texts by Gersho and Gray [16] and Jayant and Noll [44].

The primary task of a source codec is to represent a signal with the minimum number of (binary) symbols without exceeding an “acceptable level of distortion”, which is determined by the application. Two types of source coding techniques are typically named:

Chapter 2 briefly reviews the concepts of probability, random variables, and random processes. Lossless source coding will be described in Chapter 3. The Chapters 5, 6, and 7 give an introduction to the lossy coding techniques that are found in modern video coding applications. In Chapter 4, we provide some important results of rate distortion theory, which will be used for discussing the efficiency of the presented lossy coding techniques.

This is the html version of the book:

Thomas Wiegand and Heiko Schwarz (2010): "Source Coding: Part I of Fundamentals of Source and Video Coding", Now Publishers, Foundations and Trends® in Signal Processing: Vol. 4: No 1-2, pp 1-222.

2 Random Processes

The primary goal of video communication, and signal transmission in general, is the transmission of new information to a receiver. Since the receiver does not know the transmitted signal in advance, the source of information can be modeled as a random process. This permits the description of source coding and communication systems using the mathematical framework of the theory of probability and random processes. If reasonable assumptions are made with respect to the source of information, the performance of source coding algorithms can be characterized based on probabilistic averages. The modeling of information sources as random processes builds the basis for the mathematical theory of source coding and communication.

In this chapter, we give a brief overview of the concepts of probability, random variables, and random processes and introduce models for random processes, which will be used in the following chapters for evaluating the efficiency of the described source coding algorithms. For further information on the theory of probability, random variables, and random processes, the interested reader is referred to [45, 60, 25].

Probability theory is a branch of mathematics, which concerns the description and modeling of random events. The basis for modern probability theory is the axiomatic definition of probability that was introduced by Kolmogorov in [45] using concepts from set theory.

We consider an experiment with an uncertain outcome, which

is called a random experiment. The union of all possible outcomes

ζ of the random experiment is referred to as the certain event or

sample space of the random experiment and is denoted by  . A

subset

. A

subset  of the sample space

of the sample space  is called an event. To each event

is called an event. To each event  a

measure P(

a

measure P( ) is assigned, which is referred to as the probability of the

event

) is assigned, which is referred to as the probability of the

event  . The measure of probability satisfies the following three

axioms:

. The measure of probability satisfies the following three

axioms:

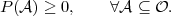

| (2.1) |

is equal to 1,

is equal to 1,

| (2.2) |

i : i = 0,1,

i : i = 0,1, } is a countable set of events

such that

} is a countable set of events

such that  i ∩

i ∩ j = ∅ for i≠j, then

j = ∅ for i≠j, then

| (2.3) |

In addition to the axioms, the notion of the independence of two events and the conditional probability are introduced:

i and

i and  j are independent if the probability of their

intersection is the product of their probabilities,

j are independent if the probability of their

intersection is the product of their probabilities,

| (2.4) |

i given another

event

i given another

event  j, with P(

j, with P( j) > 0, is denoted by P(

j) > 0, is denoted by P( i|

i| j) and is defined

as

j) and is defined

as

| (2.5) |

The definitions (2.4) and (2.5) imply that, if two events  i and

i and  j

are independent and P(

j

are independent and P( j) > 0, the conditional probability of the

event

j) > 0, the conditional probability of the

event  i given the event

i given the event  j is equal to the marginal probability

of

j is equal to the marginal probability

of  i,

i,

| (2.6) |

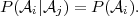

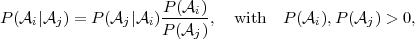

A direct consequence of the definition of conditional probability in (2.5) is Bayes’ theorem,

| (2.7) |

which described the interdependency of the conditional probabilities

P( i|

i| j) and P(

j) and P( j|

j| i) for two events

i) for two events  i and

i and  j.

j.

A concept that we will use throughout this text are random variables,

which will be denoted with upper-case letters. A random variable S is a

function of the sample space  that assigns a real value S(ζ) to each

outcome ζ ∈

that assigns a real value S(ζ) to each

outcome ζ ∈ of a random experiment.

of a random experiment.

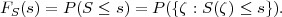

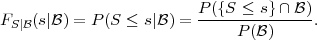

The cumulative distribution function (cdf) of a random variable S is denoted by FS(s) and specifies the probability of the event {S ≤s},

| (2.8) |

The cdf is a non-decreasing function with FS(-∞) = 0 and FS(∞) = 1.

The concept of defining a cdf can be extended to sets of two or more

random variables S = {S0, ,SN-1}. The function

,SN-1}. The function

| (2.9) |

is referred to as N-dimensional cdf, joint cdf, or joint distribution. A set S

of random variables is also referred to as a random vector and is also

denoted using the vector notation S = (S0, ,SN-1)T . For the

joint cdf of two random variables X and Y we will use the notation

FXY (x,y) = P(X ≤ x,Y ≤ y). The joint cdf of two random vectors X and

Y will be denoted by FXY (x,y) = P(X ≤ x,Y ≤ y).

,SN-1)T . For the

joint cdf of two random variables X and Y we will use the notation

FXY (x,y) = P(X ≤ x,Y ≤ y). The joint cdf of two random vectors X and

Y will be denoted by FXY (x,y) = P(X ≤ x,Y ≤ y).

The conditional cdf or conditional distribution of a random variable S

given an event  , with P(

, with P( ) > 0, is defined as the conditional probability

of the event {S ≤ s} given the event

) > 0, is defined as the conditional probability

of the event {S ≤ s} given the event  ,

,

| (2.10) |

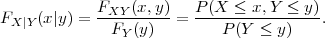

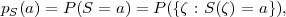

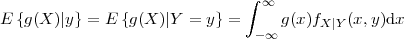

The conditional distribution of a random variable X given another random variable Y is denoted by FX|Y (x|y) and defined as

| (2.11) |

Similarly, the conditional cdf of a random vector X given another random vector Y is given by FX|Y (x|y) = FXY (x,y)∕FY (y).

2.2.1 Continuous Random Variables

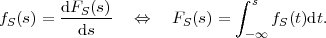

A random variable S is called a continuous random variable, if its cdf FS(s) is a continuous function. The probability P(S = s) is equal to zero for all values of s. An important function of continuous random variables is the probability density function (pdf), which is defined as the derivative of the cdf,

| (2.12) |

Since the cdf FS(s) is a monotonically non-decreasing function, the pdf fS(s) is greater than or equal to zero for all values of s. Important examples for pdf’s, which we will use later in this text, are given below.

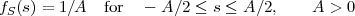

Uniform pdf:

| (2.13) |

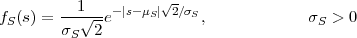

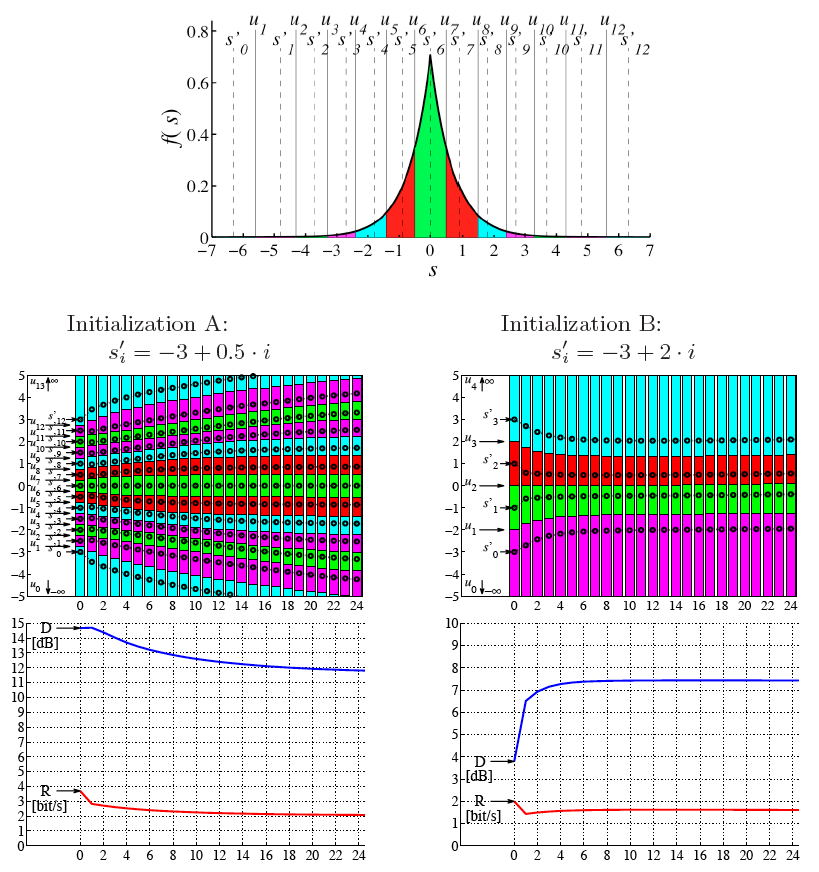

Laplacian pdf:

| (2.14) |

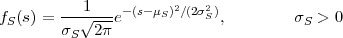

Gaussian pdf:

| (2.15) |

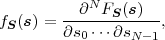

The concept of defining a probability density function is also extended to

random vectors S = (S0, ,SN-1)T . The multivariate derivative of the

joint cdf FS(s),

,SN-1)T . The multivariate derivative of the

joint cdf FS(s),

| (2.16) |

is referred to as the N-dimensional pdf, joint pdf, or joint density. For two random variables X and Y , we will use the notation fXY (x,y) for denoting the joint pdf of X and Y . The joint density of two random vectors X and Y will be denoted by fXY (x,y).

The conditional pdf or conditional density fS| (s|

(s| ) of a random

variable S given an event

) of a random

variable S given an event  , with P(

, with P( ) > 0, is defined as the derivative of

the conditional distribution FS|

) > 0, is defined as the derivative of

the conditional distribution FS| (s|

(s| ), fS|

), fS| (s|

(s| ) = dFS|

) = dFS| (s|

(s| )∕ds. The

conditional density of a random variable X given another random

variable Y is denoted by fX|Y (x|y) and defined as

)∕ds. The

conditional density of a random variable X given another random

variable Y is denoted by fX|Y (x|y) and defined as

| (2.17) |

Similarly, the conditional pdf of a random vector X given another random vector Y is given by fX|Y (x|y) = fXY (x,y)∕fY (y).

2.2.2 Discrete Random Variables

A random variable S is said to be a discrete random variable if its

cdf FS(s) represents a staircase function. A discrete random variable S can

only take values of a countable set  = {a0,a1,…}, which is called the

alphabet of the random variable. For a discrete random variable S with an

alphabet

= {a0,a1,…}, which is called the

alphabet of the random variable. For a discrete random variable S with an

alphabet  , the function

, the function

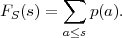

| (2.18) |

which gives the probabilities that S is equal to a particular alphabet letter, is referred to as probability mass function (pmf). The cdf FS(s) of a discrete random variable S is given by the sum of the probability masses p(a) with a ≤ s,

| (2.19) |

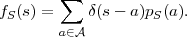

With the Dirac delta function δ it is also possible to use a pdf fS for describing the statistical properties of a discrete random variables S with a pmf pS(a),

| (2.20) |

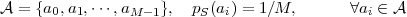

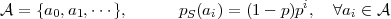

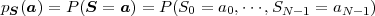

Examples for pmf’s that will be used in this text are listed below. The pmf’s are specified in terms of parameters p and M, where p is a real number in the open interval (0,1) and M is an integer greater than 1. The binary and uniform pmf are specified for discrete random variables with a finite alphabet, while the geometric pmf is specified for random variables with a countably infinite alphabet.

Binary pmf:

| (2.21) |

Uniform pmf:

| (2.22) |

Geometric pmf:

| (2.23) |

The pmf for a random vector S = (S0, ,SN-1)T is defined by

,SN-1)T is defined by

| (2.24) |

and is also referred to as N-dimensional pmf or joint pmf. The joint pmf for two random variables X and Y or two random vectors X and Y will be denoted by pXY (ax,ay) or pXY (ax,ay), respectively.

The conditional pmf pS| (a|

(a| ) of a random variable S given an event

) of a random variable S given an event  ,

with P(

,

with P( ) > 0, specifies the conditional probabilities of the events {S = a}

given the event B, pS|

) > 0, specifies the conditional probabilities of the events {S = a}

given the event B, pS| (a|

(a| ) = P(S = a|

) = P(S = a| ). The conditional pmf of a

random variable X given another random variable Y is denoted by

pX|Y (ax|ay) and defined as

). The conditional pmf of a

random variable X given another random variable Y is denoted by

pX|Y (ax|ay) and defined as

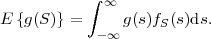

| (2.25) |

Similarly, the conditional pmf of a random vector X given another random vector Y is given by pX|Y (ax|ay) = pXY (ax,ay)∕pY (ay).

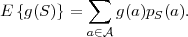

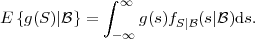

Statistical properties of random variables are often expressed using probabilistic averages, which are referred to as expectation values or expected values. The expectation value of an arbitrary function g(S) of a continuous random variable S is defined by the integral

| (2.26) |

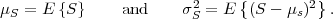

For discrete random variables S, it is defined as the sum

| (2.27) |

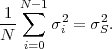

Two important expectation values are the mean μS and the variance σS2 of a random variable S, which are given by

| (2.28) |

For the following discussion of expectation values, we consider continuous random variables. For discrete random variables, the integrals have to be replaced by sums and the pdf’s have to be replaced by pmf’s.

The expectation value of a function g(S) of a set N random variables

S = {S0, ,SN-1} is given by

,SN-1} is given by

| (2.29) |

The conditional expectation value of a function g(S) of a random

variable S given an event  , with P(

, with P( ) > 0, is defined by

) > 0, is defined by

| (2.30) |

The conditional expectation value of a function g(X) of random variable X given a particular value y for another random variable Y is specified by

| (2.31) |

and represents a deterministic function of the value y. If the value y is

replaced by the random variable Y , the expression E specifies a

new random variable that is a function of the random variable Y . The

expectation value E

specifies a

new random variable that is a function of the random variable Y . The

expectation value E of a random variable Z = E

of a random variable Z = E can be

computed using the iterative expectation rule,

can be

computed using the iterative expectation rule,

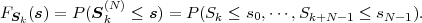

We now consider a series of random experiments that are performed at time instants tn, with n being an integer greater than or equal to 0. The outcome of each random experiment at a particular time instant tn is characterized by a random variable Sn = S(tn). The series of random variables S = {Sn} is called a discrete-time1 random process. The statistical properties of a discrete-time random process S can be characterized by the N-th order joint cdf

| (2.33) |

Random processes S that represent a series of continuous random variables Sn are called continuous random processes and random processes for which the random variables Sn are of discrete type are referred to as discrete random processes. For continuous random processes, the statistical properties can also be described by the N-th order joint pdf, which is given by the multivariate derivative

| (2.34) |

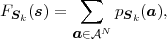

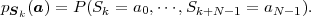

For discrete random processes, the N-th order joint cdf FSk(s) can also be specified using the N-th order joint pmf,

| (2.35) |

where  N represent the product space of the alphabets

N represent the product space of the alphabets  n for the random

variables Sn with n = k,

n for the random

variables Sn with n = k, ,k + N - 1 and

,k + N - 1 and

| (2.36) |

represents the N-th order joint pmf.

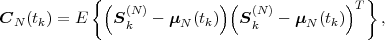

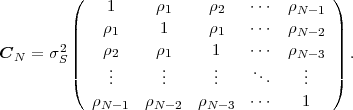

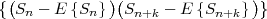

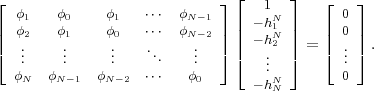

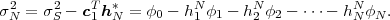

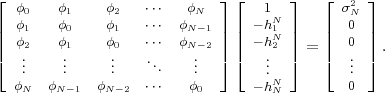

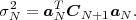

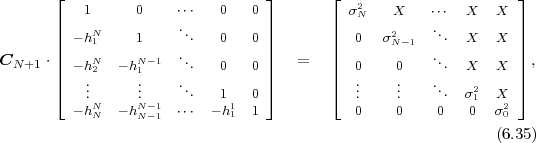

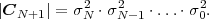

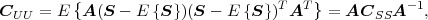

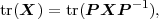

The statistical properties of random processes S = {Sn} are often characterized by an N-th order autocovariance matrix CN(tk) or an N-th order autocorrelation matrix RN(tk). The N-th order autocovariance matrix is defined by

| (2.37) |

where Sk(N) represents the vector (Sk, ,Sk+N-1)T of N successive

random variables and μN(tk) = E

,Sk+N-1)T of N successive

random variables and μN(tk) = E Sk(N)

Sk(N) is the N-th order mean. The

N-th order autocorrelation matrix is defined by

is the N-th order mean. The

N-th order autocorrelation matrix is defined by

| (2.38) |

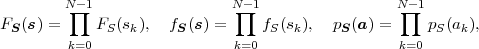

A random process is called stationary if its statistical properties are invariant to a shift in time. For stationary random processes, the N-th order joint cdf FSk(s), pdf fSk(s), and pmf pSk(a) are independent of the first time instant tk and are denoted by FS(s), fS(s), and pS(a), respectively. For the random variables Sn of stationary processes we will often omit the index n and use the notation S.

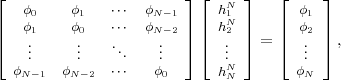

For stationary random processes, the N-th order mean, the N-th order

autocovariance matrix, and the N-th order autocorrelation matrix are

independent of the time instant tk and are denoted by μN, CN, and RN,

respectively. The N-th order mean μN is a vector with all N elements being

equal to the mean μS of the random variables S. The N-th order

autocovariance matrix CN = E (S(N) -μN)(S(N) -μN)T

(S(N) -μN)(S(N) -μN)T  is a

symmetric Toeplitz matrix,

is a

symmetric Toeplitz matrix,

| (2.39) |

A Toepliz matrix is a matrix with constant values along all descending

diagonals from left to right. For information on the theory and application

of Toeplitz matrices the reader is referred to the standard reference [29]

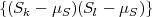

and the tutorial [23]. The (k,l)-th element of the autocovariance matrix

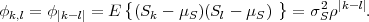

CN is given by the autocovariance function ϕk,l = E .

For stationary processes, the autocovariance function depends only on the

absolute values |k - l| and can be written as ϕk,l = ϕ|k-l| = σS2ρ|k-l|. The

N-th order autocorrelation matrix RN is also is symmetric Toeplitz matrix.

The (k,l)-th element of RN is given by rk,l = ϕk,l + μS2.

.

For stationary processes, the autocovariance function depends only on the

absolute values |k - l| and can be written as ϕk,l = ϕ|k-l| = σS2ρ|k-l|. The

N-th order autocorrelation matrix RN is also is symmetric Toeplitz matrix.

The (k,l)-th element of RN is given by rk,l = ϕk,l + μS2.

A random process S = {Sn} for which the random variables Sn are

independent is referred to as memoryless random process. If a memoryless

random process is additionally stationary it is also said to be independent

and identical distributed (iid), since the random variables Sn are

independent and their cdf’s FSn(s) = P(Sn ≤ s) do not depend on the time

instant tn. The N-th order cdf FS(s), pdf fS(s), and pmf pS(a) for iid

processes, with s = (s0, ,sN-1)T and a = (a0,

,sN-1)T and a = (a0, ,aN-1)T , are given by

the products

,aN-1)T , are given by

the products

| (2.40) |

where FS(s), fS(s), and pS(a) are the marginal cdf, pdf, and pmf, respectively, for the random variables Sn.

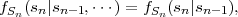

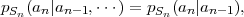

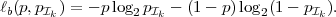

A Markov process is characterized by the property that future outcomes do not depend on past outcomes, but only on the present outcome,

| (2.41) |

This property can also be expressed in terms of the pdf,

| (2.42) |

for continuous random processes, or in terms of the pmf,

| (2.43) |

for discrete random processes,

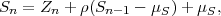

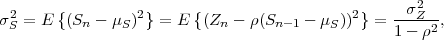

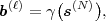

Given a continuous zero-mean iid process Z = {Zn}, a stationary continuous Markov process S = {Sn} with mean μS can be constructed by the recursive rule

| (2.44) |

where ρ, with |ρ| < 1, represents the correlation coefficient between successive random variables Sn-1 and Sn. Since the random variables Zn are independent, a random variable Sn only depends on the preceding random variable Sn-1. The variance σS2 of the stationary Markov process S is given by

| (2.45) |

where σZ2 = E denotes the variance of the zero-mean iid process Z.

The autocovariance function of the process S is given by

denotes the variance of the zero-mean iid process Z.

The autocovariance function of the process S is given by

| (2.46) |

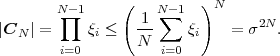

Each element ϕk,l of the N-th order autocorrelation matrix CN represents a non-negative integer power of the correlation coefficient ρ.

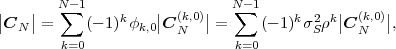

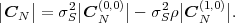

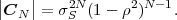

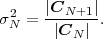

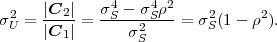

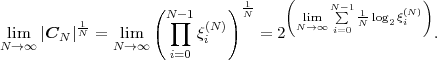

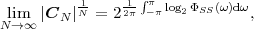

In following chapters, we will often obtain expressions that depend on the determinant |CN| of the N-th order autocovariance matrix CN. For stationary continuous Markov processes given by (2.44), the determinant |CN| can be expressed by a simple relationship. Using Laplace’s formula, we can expand the determinant of the N-th order autocovariance matrix along the first column,

| (2.47) |

where CN(k,l) represents the matrix that is obtained by removing the k-th row and l-th column from CN. The first row of each matrix CN(k,l), with k > 1, is equal to the second row of the same matrix multiplied by the correlation coefficient ρ. Hence, the first two rows of these matrices are linearly dependent and the determinants |CN(k,l)|, with k > 1, are equal to 0. Thus, we obtain

| (2.48) |

The matrix CN(0,0) represents the autocovariance matrix CN-1 of the order (N - 1). The matrix CN(1,0) is equal to CN-1 except that the first row is multiplied by the correlation coefficient ρ. Hence, the determinant |CN(1,0)| is equal to ρ|CN-1|, which yields the recursive rule

| (2.49) |

By using the expression |C1| = σS2 for the determinant of the 1-st order autocovariance matrix, we obtain the relationship

| (2.50) |

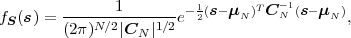

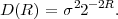

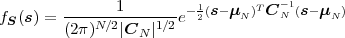

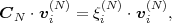

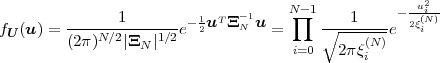

A continuous random process S = {Sn} is said to be a Gaussian process if all finite collections of random variables Sn represent Gaussian random vectors. The N-th order pdf of a stationary Gaussian process S with mean μS and variance σS2 is given by

| (2.51) |

where s is a vector of N consecutive samples, μN is the N-th order mean (a vector with all N elements being equal to the mean μS), and CN is an N-th order nonsingular autocovariance matrix given by (2.39).

A continuous random process is called a Gauss-Markov process if it satisfies the requirements for both Gaussian processes and Markov processes. The statistical properties of a stationary Gauss-Markov are completely specified by its mean μS, its variance σS2, and its correlation coefficient ρ. The stationary continuous process in (2.44) is a stationary Gauss-Markov process if the random variables Zn of the zero-mean iid process Z have a Gaussian pdf fZ(s).

The N-th order pdf of a stationary Gauss-Markov process S with the mean μS, the variance σS2, and the correlation coefficient ρ is given by (2.51), where the elements ϕk,l of the N-th order autocovariance matrix CN depend on the variance σS2 and the correlation coefficient ρ and are given by (2.46). The determinant |CN| of the N-th order autocovariance matrix of a stationary Gauss-Markov process can be written according to (2.50).

2.4 Summary of Random Processes

In this chapter, we gave a brief review of the concepts of random variables and random processes. A random variable is a function of the sample space of a random experiment. It assigns a real value to each possible outcome of the random experiment. The statistical properties of random variables can be characterized by cumulative distribution functions (cdf’s), probability density functions (pdf’s), probability mass functions (pmf’s), or expectation values.

Finite collections of random variables are called random vectors. A countably infinite sequence of random variables is referred to as (discrete-time) random process. Random processes for which the statistical properties are invariant to a shift in time are called stationary processes. If the random variables of a process are independent, the process is said to be memoryless. Random processes that are stationary and memoryless are also referred to as independent and identically distributed (iid) processes. Important models for random processes, which will also be used in this text, are Markov processes, Gaussian processes, and Gauss-Markov processes.

Beside reviewing the basic concepts of random variables and random processes, we also introduced the notations that will be used throughout the text. For simplifying formulas in the following chapters, we will often omit the subscripts that characterize the random variable(s) or random vector(s) in the notations of cdf’s, pdf’s, and pmf’s.

This is the html version of the book:

Thomas Wiegand and Heiko Schwarz (2010): "Source Coding: Part I of Fundamentals of Source and Video Coding", Now Publishers, Foundations and Trends® in Signal Processing: Vol. 4: No 1-2, pp 1-222.

3 Lossless Source Coding

Lossless source coding describes a reversible mapping of sequences of discrete source symbols into sequences of codewords. In contrast to lossy coding techniques, the original sequence of source symbols can be exactly reconstructed from the sequence of codewords. Lossless coding is also referred to as noiseless coding or entropy coding. If the original signal contains statistical properties or dependencies that can be exploited for data compression, lossless coding techniques can provide a reduction in transmission rate. Basically all source codecs, and in particular all video codecs, include a lossless coding part by which the coding symbols are efficiently represented inside a bitstream.

In this chapter, we give an introduction to lossless source coding. We analyze the requirements for unique decodability, introduce a fundamental bound for the minimum average codeword length per source symbol that can be achieved with lossless coding techniques, and discuss various lossless source codes with respect to their efficiency, applicability, and complexity. For further information on lossless coding techniques, the reader is referred to the overview of lossless compression techniques in [67].

3.1 Classification of Lossless Source Codes

In this text, we restrict our considerations to the practically important case

of binary codewords. A codeword is a sequence of binary symbols (bits)

of the alphabet  = {0,1}. Let S = {Sn} be a stochastic process

that generates sequences of discrete source symbols. The source

symbols sn are realizations of the random variables Sn. By the process of

lossless coding, a message s(L) = {s0,

= {0,1}. Let S = {Sn} be a stochastic process

that generates sequences of discrete source symbols. The source

symbols sn are realizations of the random variables Sn. By the process of

lossless coding, a message s(L) = {s0, ,sL-1} consisting of L source

symbols is converted into a sequence b(K) = {b0,

,sL-1} consisting of L source

symbols is converted into a sequence b(K) = {b0, ,bK-1} of K

bits.

,bK-1} of K

bits.

In practical coding algorithms, a message s(L) is often split into blocks

s(N) = {sn, ,sn+N-1} of N symbols, with 1 ≤ N ≤ L, and a codeword

b(ℓ)(s(N)) = {b0,

,sn+N-1} of N symbols, with 1 ≤ N ≤ L, and a codeword

b(ℓ)(s(N)) = {b0, ,bℓ-1} of ℓ bits is assigned to each of these blocks s(N).

The length ℓ of a codeword bℓ(s(N)) can depend on the symbol block s(N).

The codeword sequence b(K) that represents the message s(L) is

obtained by concatenating the codewords bℓ(s(N)) for the symbol

blocks s(N). A lossless source code can be described by the encoder

mapping

,bℓ-1} of ℓ bits is assigned to each of these blocks s(N).

The length ℓ of a codeword bℓ(s(N)) can depend on the symbol block s(N).

The codeword sequence b(K) that represents the message s(L) is

obtained by concatenating the codewords bℓ(s(N)) for the symbol

blocks s(N). A lossless source code can be described by the encoder

mapping

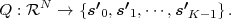

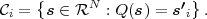

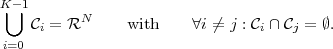

| (3.1) |

which specifies a mapping from the set of finite length symbol blocks to the set of finite length binary codewords. The decoder mapping

| (3.2) |

is the inverse of the encoder mapping γ.

Depending on whether the number N of symbols in the blocks s(N) and the number ℓ of bits for the associated codewords are fixed or variable, the following categories can be distinguished:

3.2 Variable-Length Coding for Scalars

In this section, we consider lossless source codes that assign a separate

codeword to each symbol sn of a message s(L). It is supposed that the

symbols of the message s(L) are generated by a stationary discrete random

process S = {Sn}. The random variables Sn = S are characterized by a

finite11 The fundamental concepts and results shown in this section are also valid for countably infinite

symbol alphabets (M →∞).

symbol alphabet  = {a0,

= {a0, ,aM-1} and a marginal pmf p(a) = P(S = a).

The lossless source code associates each letter ai of the alphabet

,aM-1} and a marginal pmf p(a) = P(S = a).

The lossless source code associates each letter ai of the alphabet  with a

binary codeword bi = {b0i,

with a

binary codeword bi = {b0i, ,bℓ(ai)-1i} of a length ℓ(ai) ≥ 1. The

goal of the lossless code design is to minimize the average codeword

length

,bℓ(ai)-1i} of a length ℓ(ai) ≥ 1. The

goal of the lossless code design is to minimize the average codeword

length

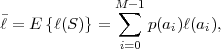

| (3.3) |

while ensuring that each message s(L) is uniquely decodable given their coded representation b(K).

A code is said to be uniquely decodable if and only if each valid coded representation b(K) of a finite number K of bits can be produced by only one possible sequence of source symbols s(L).

A necessary condition for unique decodability is that each letter ai of

the symbol alphabet  is associated with a different codeword. Codes

with this property are called non-singular codes and ensure that a

single source symbol is unambiguously represented. But if messages

with more than one symbol are transmitted, non-singularity is not

sufficient to guarantee unique decodability, as will be illustrated in the

following.

is associated with a different codeword. Codes

with this property are called non-singular codes and ensure that a

single source symbol is unambiguously represented. But if messages

with more than one symbol are transmitted, non-singularity is not

sufficient to guarantee unique decodability, as will be illustrated in the

following.

Table 3.1 shows five example codes for a source with a four letter alphabet and a given marginal pmf. Code A has the smallest average codeword length, but since the symbols a2 and a3 cannot be distinguished2. Code A is a singular code and is not uniquely decodable. Although code B is a non-singular code, it is not uniquely decodable either, since the concatenation of the letters a1 and a0 produces the same bit sequence as the letter a2. The remaining three codes are uniquely decodable, but differ in other properties. While code D has an average codeword length of 2.125 bit per symbol, the codes C and E have an average codeword length of only 1.75 bit per symbol, which is, as we will show later, the minimum achievable average codeword length for the given source. Beside being uniquely decodable, the codes D and E are also instantaneously decodable, i.e., each alphabet letter can be decoded right after the bits of its codeword are received. The code C does not have this property. If a decoder for the code C receives a bit equal to 0, it has to wait for the next bit equal to 0 before a symbol can be decoded. Theoretically, the decoder might need to wait until the end of the message. The value of the next symbol depends on how many bits equal to 1 are received between the zero bits.

Binary codes can be represented using binary trees as illustrated in Fig. 3.1. A binary tree is a data structure that consists of nodes, with each node having zero, one, or two descendant nodes. A node and its descendants nodes are connected by branches. A binary tree starts with a root node, which is the only node that is not a descendant of any other node. Nodes that are not the root node but have descendants are referred to as interior nodes, whereas nodes that do not have descendants are called terminal nodes or leaf nodes.

In a binary code tree, all branches are labeled with ‘0’ or ‘1’. If two

branches depart from the same node, they have different labels. Each node

of the tree represents a codeword, which is given by the concatenation of

the branch labels from the root node to the considered node. A

code for a given alphabet  can be constructed by associating all

terminal nodes and zero or more interior nodes of a binary code

tree with one or more alphabet letters. If each alphabet letter is

associated with a distinct node, the resulting code is non-singular. In the

example of Fig. 3.1, the nodes that represent alphabet letters are

filled.

can be constructed by associating all

terminal nodes and zero or more interior nodes of a binary code

tree with one or more alphabet letters. If each alphabet letter is

associated with a distinct node, the resulting code is non-singular. In the

example of Fig. 3.1, the nodes that represent alphabet letters are

filled.

A code is said to be a prefix code if no codeword for an alphabet letter represents the codeword or a prefix of the codeword for any other alphabet letter. If a prefix code is represented by a binary code tree, this implies that each alphabet letter is assigned to a distinct terminal node, but not to any interior node. It is obvious that every prefix code is uniquely decodable. Furthermore, we will prove later that for every uniquely decodable code there exists a prefix code with exactly the same codeword lengths. Examples for prefix codes are the codes D and E in Table 3.1.

Based on the binary code tree representation the parsing rule for prefix codes can be specified as follows:

The parsing rule reveals that prefix codes are not only uniquely decodable, but also instantaneously decodable. As soon as all bits of a codeword are received, the transmitted symbol is immediately known. Due to this property, it is also possible to switch between different independently designed prefix codes inside a bitstream (i.e., because symbols with different alphabets are interleaved according to a given bitstream syntax) without impacting the unique decodability.

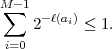

A necessary condition for uniquely decodable codes is given by the Kraft inequality,

| (3.4) |

For proving this inequality, we consider the term

| (3.5) |

The term ℓL = ℓ(ai0) + ℓ(ai1) +  + ℓ(aiL-1) represents the combined

codeword length for coding L symbols. Let A(ℓL) denote the number

of distinct symbol sequences that produce a bit sequence with the

same length ℓL. A(ℓL) is equal to the number of terms 2-ℓL that are

contained in the sum of the right side of (3.5). For a uniquely decodable

code, A(ℓL) must be less than or equal to 2ℓL, since there are only

2ℓL distinct bit sequences of length ℓL. If the maximum length of a

codeword is ℓmax, the combined codeword length ℓL lies inside the

interval [L,L ⋅ ℓmax]. Hence, a uniquely decodable code must fulfill the

inequality

+ ℓ(aiL-1) represents the combined

codeword length for coding L symbols. Let A(ℓL) denote the number

of distinct symbol sequences that produce a bit sequence with the

same length ℓL. A(ℓL) is equal to the number of terms 2-ℓL that are

contained in the sum of the right side of (3.5). For a uniquely decodable

code, A(ℓL) must be less than or equal to 2ℓL, since there are only

2ℓL distinct bit sequences of length ℓL. If the maximum length of a

codeword is ℓmax, the combined codeword length ℓL lies inside the

interval [L,L ⋅ ℓmax]. Hence, a uniquely decodable code must fulfill the

inequality

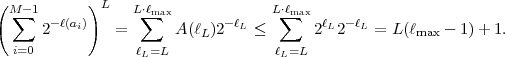

| (3.6) |

The left side of this inequality grows exponentially with L, while the right side grows only linearly with L. If the Kraft inequality (3.4) is not fulfilled, we can always find a value of L for which the condition (3.6) is violated. And since the constraint (3.6) must be obeyed for all values of L ≥ 1, this proves that the Kraft inequality specifies a necessary condition for uniquely decodable codes.

The Kraft inequality does not only provide a necessary condition for

uniquely decodable codes, it is also always possible to construct a uniquely

decodable code for any given set of codeword lengths {ℓ0,ℓ1, ,ℓM-1} that

satisfies the Kraft inequality. We prove this statement for prefix codes,

which represent a subset of uniquely decodable codes. Without loss of

generality, we assume that the given codeword lengths are ordered as

ℓ0 ≤ ℓ1 ≤

,ℓM-1} that

satisfies the Kraft inequality. We prove this statement for prefix codes,

which represent a subset of uniquely decodable codes. Without loss of

generality, we assume that the given codeword lengths are ordered as

ℓ0 ≤ ℓ1 ≤ ≤ ℓM-1. Starting with an infinite binary code tree, we

chose an arbitrary node of depth ℓ0 (i.e., a node that represents a

codeword of length ℓ0) for the first codeword and prune the code tree at

this node. For the next codeword length ℓ1, one of the remaining

nodes with depth ℓ1 is selected. A continuation of this procedure

yields a prefix code for the given set of codeword lengths, unless we

cannot select a node for a codeword length ℓi because all nodes of

depth ℓi have already been removed in previous steps. It should be

noted that the selection of a codeword of length ℓk removes 2ℓi-ℓk

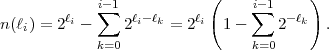

codewords with a length of ℓi ≥ ℓk. Consequently, for the assignment of

a codeword length ℓi, the number of available codewords is given

by

≤ ℓM-1. Starting with an infinite binary code tree, we

chose an arbitrary node of depth ℓ0 (i.e., a node that represents a

codeword of length ℓ0) for the first codeword and prune the code tree at

this node. For the next codeword length ℓ1, one of the remaining

nodes with depth ℓ1 is selected. A continuation of this procedure

yields a prefix code for the given set of codeword lengths, unless we

cannot select a node for a codeword length ℓi because all nodes of

depth ℓi have already been removed in previous steps. It should be

noted that the selection of a codeword of length ℓk removes 2ℓi-ℓk

codewords with a length of ℓi ≥ ℓk. Consequently, for the assignment of

a codeword length ℓi, the number of available codewords is given

by

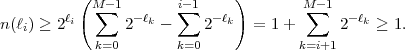

| (3.7) |

If the Kraft inequality (3.4) is fulfilled, we obtain

| (3.8) |

Hence, it is always possible to construct a prefix code, and thus a uniquely decodable code, for a given set of codeword lengths that satisfies the Kraft inequality.

The proof shows another important property of prefix codes. Since all uniquely decodable codes fulfill the Kraft inequality and it is always possible to construct a prefix code for any set of codeword lengths that satisfies the Kraft inequality, there do not exist uniquely decodable codes that have a smaller average codeword length than the best prefix code. Due to this property and since prefix codes additionally provide instantaneous decodability and are easy to construct, all variable length codes that are used in practice are prefix codes.

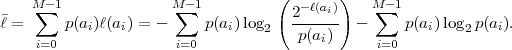

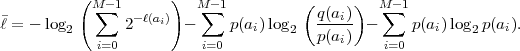

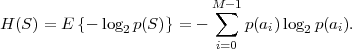

Based on the Kraft inequality, we now derive a lower bound for the average codeword length of uniquely decodable codes. The expression (3.3) for the average codeword length can be rewritten as

| (3.9) |

With the definition q(ai) = 2-ℓ(ai)∕ , we obtain

, we obtain

| (3.10) |

Since the Kraft inequality is fulfilled for all uniquely decodable codes, the first term on the right side of (3.10) is greater than or equal to 0. The second term is also greater than or equal to 0 as can be shown using the inequality lnx ≤ x - 1 (with equality if and only if x = 1),

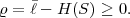

The inequality (3.11) is also referred to as divergence inequality for probability mass functions. The average codeword length for uniquely decodable codes is bounded by

| (3.12) |

with

| (3.13) |

The lower bound H(S) is called the entropy of the random variable S and does only depend on the associated pmf p. Often the entropy of a random variable with a pmf p is also denoted as H(p). The redundancy of a code is given by the difference

| (3.14) |

The entropy H(S) can also be considered as a measure for the uncertainty3 that is associated with the random variable S.

The inequality (3.12) is an equality if and only if the first and second

term on the right side of (3.10) are equal to 0. This is only the case

if the Kraft inequality is fulfilled with equality and q(ai) = p(ai),

∀ai ∈ . The resulting conditions ℓ(ai) = -log 2p(ai), ∀ai ∈

. The resulting conditions ℓ(ai) = -log 2p(ai), ∀ai ∈ , can only

hold if all alphabet letters have probabilities that are integer powers

of 1∕2.

, can only

hold if all alphabet letters have probabilities that are integer powers

of 1∕2.

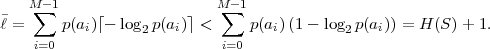

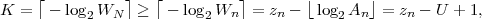

For deriving an upper bound for the minimum average codeword length

we choose ℓ(ai) = ⌈-log 2p(ai)⌉, ∀ai ∈ , where ⌈x⌉ represents the smallest

integer greater than or equal to x. Since these codeword lengths satisfy the

Kraft inequality, as can be shown using ⌈x⌉≥ x,

, where ⌈x⌉ represents the smallest

integer greater than or equal to x. Since these codeword lengths satisfy the

Kraft inequality, as can be shown using ⌈x⌉≥ x,

| (3.15) |

we can always construct a uniquely decodable code. For the average codeword length of such a code, we obtain, using ⌈x⌉ < x + 1,

| (3.16) |

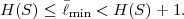

The minimum average codeword length min that can be achieved with uniquely decodable codes that assign a separate codeword to each letter of an alphabet always satisfies the inequality

| (3.17) |

The upper limit is approached for a source with a two-letter alphabet and a pmf {p,1 - p} if the letter probability p approaches 0 or 1 [15].

For deriving an upper bound for the minimum average codeword

length we chose ℓ(ai) = ⌈-log 2p(ai)⌉, ∀ai ∈ . The resulting code

has a redundancy ϱ = - H(Sn) that is always less than 1 bit per

symbol, but it does not necessarily achieve the minimum average

codeword length. For developing an optimal uniquely decodable code,

i.e., a code that achieves the minimum average codeword length,

it is sufficient to consider the class of prefix codes, since for every

uniquely decodable code there exists a prefix code with the exactly

same codeword length. An optimal prefix code has the following

properties:

. The resulting code

has a redundancy ϱ = - H(Sn) that is always less than 1 bit per

symbol, but it does not necessarily achieve the minimum average

codeword length. For developing an optimal uniquely decodable code,

i.e., a code that achieves the minimum average codeword length,

it is sufficient to consider the class of prefix codes, since for every

uniquely decodable code there exists a prefix code with the exactly

same codeword length. An optimal prefix code has the following

properties:

with p(ai) > p(aj), the

associated codeword lengths satisfy ℓ(ai) ≤ℓ(aj).

with p(ai) > p(aj), the

associated codeword lengths satisfy ℓ(ai) ≤ℓ(aj).

These conditions can be proved as follows. If the first condition is not fulfilled, an exchange of the codewords for the symbols ai and aj would decrease the average codeword length while preserving the prefix property. And if the second condition is not satisfied, i.e., if for a particular codeword with the maximum codeword length there does not exist a codeword that has the same length and differs only in the final bit, the removal of the last bit of the particular codeword would preserve the prefix property and decrease the average codeword length.

Both conditions for optimal prefix codes are obeyed if two codewords

with the maximum length that differ only in the final bit are assigned

to the two letters ai and aj with the smallest probabilities. In the

corresponding binary code tree, a parent node for the two leaf nodes that

represent these two letters is created. The two letters ai and aj can then be

treated as a new letter with a probability of p(ai) + p(aj) and the procedure

of creating a parent node for the nodes that represent the two letters with

the smallest probabilities can be repeated for the new alphabet. The

resulting iterative algorithm was developed and proved to be optimal by

Huffman in [30]. Based on the construction of a binary code tree, the

Huffman algorithm for a given alphabet  with a marginal pmf p can be

summarized as follows:

with a marginal pmf p can be

summarized as follows:

Adetailed example for the application of the Huffman algorithm is given in Fig. 3.2. Optimal prefix codes are often generally referred to as Huffman codes. It should be noted that there exist multiple optimal prefix codes for a given marginal pmf. A tighter bound than in (3.17) on the redundancy of Huffman codes is provided in [15].

3.2.4 Conditional Huffman Codes

Until now, we considered the design of variable length codes for the marginal pmf of stationary random processes. However, for random processes {Sn} with memory, it can be beneficial to design variable length codes for conditional pmfs and switch between multiple codeword tables depending on already coded symbols.

| a | a0 | a1 | a2 | entropy

|

| p(a|a0) | 0.90 | 0.05 | 0.05 | H(Sn|a0) = 0.5690 |

| p(a|a1) | 0.15 | 0.80 | 0.05 | H(Sn|a1) = 0.8842 |

| p(a|a2) | 0.25 | 0.15 | 0.60 | H(Sn|a2) = 1.3527 |

| p(a) | 0.64 | 0.24 | 0.1 | H(S) = 1.2575 |

As an example, we consider a stationary discrete Markov process with a

three symbol alphabet  = {a0,a1,a2}. The statistical properties of

this process are completely characterized by three conditional pmfs

p(a|ak) = P(Sn = a|Sn-1 = ak) with k = 0,1,2, which are given in

Table 3.2. An optimal prefix code for a given conditional pmf can be

designed in exactly the same way as for a marginal pmf. A corresponding

Huffman code design for the example Markov source is shown in Table 3.3.

For comparison, Table 3.3 lists also a Huffman code for the marginal

pmf. The codeword table that is chosen for coding a symbol sn

depends on the value of the preceding symbol sn-1. It is important to

note that an independent code design for the conditional pmfs is

only possible for instantaneously decodable codes, i.e., for prefix

codes.

= {a0,a1,a2}. The statistical properties of

this process are completely characterized by three conditional pmfs

p(a|ak) = P(Sn = a|Sn-1 = ak) with k = 0,1,2, which are given in

Table 3.2. An optimal prefix code for a given conditional pmf can be

designed in exactly the same way as for a marginal pmf. A corresponding

Huffman code design for the example Markov source is shown in Table 3.3.

For comparison, Table 3.3 lists also a Huffman code for the marginal

pmf. The codeword table that is chosen for coding a symbol sn

depends on the value of the preceding symbol sn-1. It is important to

note that an independent code design for the conditional pmfs is

only possible for instantaneously decodable codes, i.e., for prefix

codes.

|

ai | Huffman codes for conditional pmfs |

|

||||

| Sn-1 = a0 | Sn-1 = a2 | Sn-1 = a2 | ||||

| a 0 | 1 | 00 | 00 | 1 | ||

| a 1 | 00 | 1 | 01 | 00 | ||

| a 2 | 01 | 01 | 1 | 01 | ||

| 1.1 | 1.2 | 1.4 | 1.3556 | |||

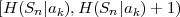

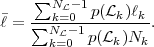

The average codeword length k = (Sn-1 = ak) of an optimal prefix

code for each of the conditional pmfs is guaranteed to lie in the half-open

interval  , where

, where

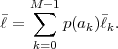

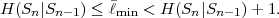

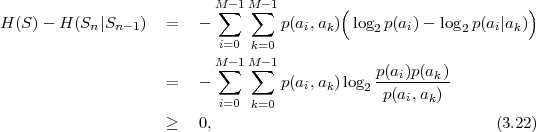

| (3.18) |

denotes the conditional entropy of the random variable Sn given the event {Sn-1 = ak}. The resulting average codeword length for the conditional code is

| (3.19) |

The resulting lower bound for the average codeword length is referred to as the conditional entropy H(Sn|Sn-1) of the random variable Sn assuming the random variable Sn-1 and is given by

where p(ai,ak) = P(Sn = ai,Sn-1 = ak) denotes the joint pmf of the random variables Sn and Sn-1. The conditional entropy H(Sn|Sn-1) specifies a measure for the uncertainty about Sn given the value of Sn-1. The minimum average codeword length min that is achievable with the conditional code design is bounded by

| (3.21) |

As can be easily shown from the divergence inequality (3.11),

, i.e., if the stationary process S is an iid process.

, i.e., if the stationary process S is an iid process.

For our example, the average codeword length of the conditional code design is 1.1578 bit per symbol, which is about 14.6% smaller than the average codeword length of the Huffman code for the marginal pmf.

For sources with memory that do not satisfy the Markov property, it can be possible to further decrease the average codeword length if more than one preceding symbol is used in the condition. However, the number of codeword tables increases exponentially with the number of considered symbols. To reduce the number of tables, the number of outcomes for the condition can be partitioned into a small number of events, and for each of these events, a separate code can be designed. As an application example, the CAVLC design in the H.264/AVC video coding standard [36] includes conditional variable length codes.

In practice, the marginal and conditional pmfs of a source are usually not known and sources are often nonstationary. Conceptually, the pmf(s) can be simultaneously estimated in encoder and decoder and a Huffman code can be redesigned after coding a particular number of symbols. This would, however, tremendously increase the complexity of the coding process. A fast algorithm for adapting Huffman codes was proposed by Gallager [15]. But even this algorithm is considered as too complex for video coding application, so that adaptive Huffman codes are rarely used in this area.

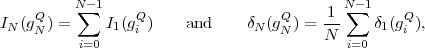

3.3 Variable-Length Coding for Vectors

Although scalar Huffman codes achieve the smallest average codeword length among all uniquely decodable codes that assign a separate codeword to each letter of an alphabet, they can be very inefficient if there are strong dependencies between the random variables of a process. For sources with memory, the average codeword length per symbol can be decreased if multiple symbols are coded jointly. Huffman codes that assign a codeword to a block of two or more successive symbols are referred to as block Huffman codes or vector Huffman codes and represent an alternative to conditional Huffman codes4. The joint coding of multiple symbols is also advantageous for iid processes for which one of the probabilities masses is close to one.

3.3.1 Huffman Codes for Fixed-Length Vectors

We consider stationary discrete random sources S = {Sn} with an

M-ary alphabet  = {a0,

= {a0, ,aM-1}. If N symbols are coded jointly, the

Huffman code has to be designed for the joint pmf

,aM-1}. If N symbols are coded jointly, the

Huffman code has to be designed for the joint pmf

of a block of N successive symbols. The average codeword length min per symbol for an optimum block Huffman code is bounded by

| (3.23) |

where

| (3.24) |

is referred to as the block entropy for a set of N successive random variables

{Sn, ,Sn+N-1}. The limit

,Sn+N-1}. The limit

| (3.25) |

is called the entropy rate of a source S. It can be shown that the limit in (3.25) always exists for stationary sources [14]. The entropy rate (S) represents the greatest lower bound for the average codeword length per symbol that can be achieved with lossless source coding techniques,

| (3.26) |

For iid processes, the entropy rate

is equal to the marginal entropy H(S). For stationary Markov processes, the entropy rate

| aiak | p(ai,ak) | codewords

| |

| a0a0 | 0.58 | 1 | |

| a0a1 | 0.032 | 00001 | |

| a0a2 | 0.032 | 00010 | |

| a1a0 | 0.036 | 0010 | |

| a1a1 | 0.195 | 01 | |

| a1a2 | 0.012 | 000000 | |

| a2a0 | 0.027 | 00011 | |

| a2a1 | 0.017 | 000001 | |

| (a) | a2a2 | 0.06 | 0011 |

| N | N |

||

| 1 | 1.3556 | 3 | |

| 2 | 1.0094 | 9 | |

| 3 | 0.9150 | 27 | |

| 4 | 0.8690 | 81 | |

| 5 | 0.8462 | 243 | |

| 6 | 0.8299 | 729 | |

| 7 | 0.8153 | 2187 | |

| 8 | 0.8027 | 6561 | |

| (b) | 9 | 0.7940 | 19683 |

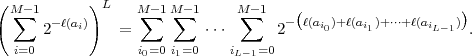

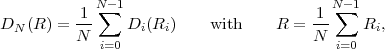

As an example for the design of block Huffman codes, we consider the discrete Markov process specified in Table 3.2. The entropy rate (S) for this source is 0.7331 bit per symbol. Table 3.4(a) shows a Huffman code for the joint coding of 2 symbols. The average codeword length per symbol for this code is 1.0094 bit per symbol, which is smaller than the average codeword length obtained with the Huffman code for the marginal pmf and the conditional Huffman code that we developed in sec. 3.2. As shown in Table 3.4(b), the average codeword length can be further reduced by increasing the number N of jointly coded symbols. If N approaches infinity, the average codeword length per symbol for the block Huffman code approaches the entropy rate. However, the number NC of codewords that must be stored in an encoder and decoder grows exponentially with the number N of jointly coded symbols. In practice, block Huffman codes are only used for a small number of symbols with small alphabets.

In general, the number of symbols in a message is not a multiple of the block size N. The last block of source symbols may contain less than N symbols, and, in that case, it cannot be represented with the block Huffman code. If the number of symbols in a message is known to the decoder (e.g., because it is determined by a given bitstream syntax), an encoder can send the codeword for any of the letter combinations that contain the last block of source symbols as a prefix. At the decoder side, the additionally decoded symbols are discarded. If the number of symbols that are contained in a message cannot be determined in the decoder, a special symbol for signaling the end of a message can be added to the alphabet.

3.3.2 Huffman Codes for Variable-Length Vectors

An additional degree of freedom for designing Huffman codes, or generally variable-length codes, for symbol vectors is obtained if the restriction that all codewords are assigned to symbol blocks of the same size is removed. Instead, the codewords can be assigned to sequences of a variable number of successive symbols. Such a code is also referred to as V2V code in this text. In order to construct a V2V code, a set of letter sequences with a variable number of letters is selected and a codeword is associated with each of these letter sequences. The set of letter sequences has to be chosen in a way that each message can be represented by a concatenation of the selected letter sequences. An exception is the end of a message, for which the same concepts as for block Huffman codes (see above) can be used.

Fig. 3.3 Example for an M-ary tree representing sequences of a variable number of letters,

of the alphabet  = {a0,a1,a2}, with an associated variable length code.

= {a0,a1,a2}, with an associated variable length code.

Similarly as for binary codes, the set of letter sequences can be

represented by an M-ary tree as depicted in Fig. 3.3. In contrast to binary

code trees, each node has up to M descendants and each branch is labeled

with a letter of the M-ary alphabet  = {a0,a1,

= {a0,a1, ,aM-1}. All branches

that depart from a particular node are labeled with different letters. The

letter sequence that is represented by a particular node is given by a

concatenation of the branch labels from the root node to the particular

node. An M-ary tree is said to be a full tree if each node is either a leaf

node or has exactly M descendants.

,aM-1}. All branches

that depart from a particular node are labeled with different letters. The

letter sequence that is represented by a particular node is given by a

concatenation of the branch labels from the root node to the particular

node. An M-ary tree is said to be a full tree if each node is either a leaf

node or has exactly M descendants.

We constrain our considerations to full M-ary trees for which all leaf nodes and only the leaf nodes are associated with codewords. This restriction yields a V2V code that fulfills the necessary condition stated above and has additionally the following useful properties:

The first property implies that any message can only be represented by a single sequence of codewords. The only exception is that, if the last symbols of a message do not represent a letter sequence that is associated with a codeword, one of multiple codewords can be selected as discussed above.

Let N denote the number of leaf nodes in a full M-ary tree

denote the number of leaf nodes in a full M-ary tree  . Each

leaf node

. Each

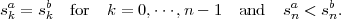

leaf node  k represents a sequence ak = {a0k,a1k,

k represents a sequence ak = {a0k,a1k, ,aNk-1k} of Nk

alphabet letters. The associated probability p(

,aNk-1k} of Nk

alphabet letters. The associated probability p( k) for coding a symbol

sequence {Sn,

k) for coding a symbol

sequence {Sn, ,Sn+Nk-1} is given by

,Sn+Nk-1} is given by

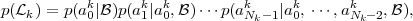

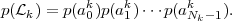

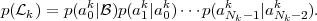

| (3.29) |

where  represents the event that the preceding symbols {S0,…,Sn-1} were

coded using a sequence of complete codewords of the V2V tree. The term

p(am|a0,

represents the event that the preceding symbols {S0,…,Sn-1} were

coded using a sequence of complete codewords of the V2V tree. The term

p(am|a0, ,am-1,

,am-1, ) denotes the conditional pmf for a random variable

Sn+m given the random variables Sn to Sn+m-1 and the event

) denotes the conditional pmf for a random variable

Sn+m given the random variables Sn to Sn+m-1 and the event  .

For iid sources, the probability p(

.

For iid sources, the probability p( k) for a leaf node

k) for a leaf node  k simplifies

to

k simplifies

to

| (3.30) |

For stationary Markov sources, the probabilities p( k) are given

by

k) are given

by

| (3.31) |

The conditional pmfs p(am|a0, ,am-1,

,am-1, ) are given by the structure of

the M-ary tree

) are given by the structure of

the M-ary tree  and the conditional pmfs p(am|a0,

and the conditional pmfs p(am|a0, ,am-1) for the

random variables Sn+m assuming the preceding random variables Sn to

Sn+m-1.

,am-1) for the

random variables Sn+m assuming the preceding random variables Sn to

Sn+m-1.

As an example, we show how the pmf p(a| ) = P(Sn = a|

) = P(Sn = a| ) that is

conditioned on the event

) that is

conditioned on the event  can be determined for Markov sources. In this

case, the probability p(am|

can be determined for Markov sources. In this

case, the probability p(am| ) = P(Sn = am|

) = P(Sn = am| ) that a codeword is assigned

to a letter sequence that starts with a particular letter am of the alphabet

) that a codeword is assigned

to a letter sequence that starts with a particular letter am of the alphabet

= {a0,a1,

= {a0,a1, ,aM-1} is given by

,aM-1} is given by

| (3.32) |

These M equations form a homogeneous linear equation system that has

one set of non-trivial solutions p(a| ) = κ ⋅{x0,x1,

) = κ ⋅{x0,x1, ,xM-1}. The scale

factor κ and thus the pmf p(a|

,xM-1}. The scale

factor κ and thus the pmf p(a| ) can be uniquely determined by using the

constraint ∑

m=0M-1p(am|

) can be uniquely determined by using the

constraint ∑

m=0M-1p(am| ) = 1.

) = 1.

After the conditional pmfs p(am|a0, ,am-1,

,am-1, ) have been determined,

the pmf p(

) have been determined,

the pmf p( ) for the leaf nodes can be calculated. An optimal prefix code

for the selected set of letter sequences, which is represented by the leaf

nodes of a full M-ary tree

) for the leaf nodes can be calculated. An optimal prefix code

for the selected set of letter sequences, which is represented by the leaf

nodes of a full M-ary tree  , can be designed using the Huffman algorithm

for the pmf p(

, can be designed using the Huffman algorithm

for the pmf p( ). Each leaf node

). Each leaf node  k is associated with a codeword of ℓk

bits. The average codeword length per symbol is given by the ratio of the

average codeword length per letter sequence and the average number of

letters per letter sequence,

k is associated with a codeword of ℓk

bits. The average codeword length per symbol is given by the ratio of the

average codeword length per letter sequence and the average number of

letters per letter sequence,

| (3.33) |

For selecting the set of letter sequences or the full M-ary tree  , we

assume that the set of applicable V2V codes for an application is given by

parameters such as the maximum number of codewords (number of leaf

nodes). Given such a finite set of full M-ary trees, we can select the full

M-ary tree

, we

assume that the set of applicable V2V codes for an application is given by

parameters such as the maximum number of codewords (number of leaf

nodes). Given such a finite set of full M-ary trees, we can select the full

M-ary tree  , for which the Huffman code yields the smallest average

codeword length per symbol .

, for which the Huffman code yields the smallest average

codeword length per symbol .

| ak | p(  k) k) | codewords

| |

| a0a0 | 0.5799 | 1 | |

| a0a1 | 0.0322 | 00001 | |

| a0a2 | 0.0322 | 00010 | |

| a1a0 | 0.0277 | 00011 | |

| a1a1a0 | 0.0222 | 000001 | |

| a1a1a1 | 0.1183 | 001 | |

| a1a1a2 | 0.0074 | 0000000 | |

| a1a2 | 0.0093 | 0000001 | |

| (a) | a2 | 0.1708 | 01 |

N | ||

| 5 | 1.1784 | |

| 7 | 1.0551 | |

| 9 | 1.0049 | |

| 11 | 0.9733 | |

| 13 | 0.9412 | |

| 15 | 0.9293 | |

| 17 | 0.9074 | |

| 19 | 0.8980 | |

| (b) | 21 | 0.8891 |

= 9 codewords; (b) Average codeword lengths depending on the number of

codewords N

= 9 codewords; (b) Average codeword lengths depending on the number of

codewords N .

.

As an example for the design of a V2V Huffman code, we again consider the stationary discrete Markov source specified in Table 3.2. Table 3.5(a) shows a V2V code that minimizes the average codeword length per symbol among all V2V codes with up to 9 codewords. The average codeword length is 1.0049 bit per symbol, which is about 0.4% smaller than the average codeword length for the block Huffman code with the same number of codewords. As indicated in Table 3.5(b), when increasing the number of codewords, the average codeword length for V2V codes usually decreases faster as for block Huffman codes. The V2V code with 17 codewords has already an average codeword length that is smaller than that of the block Huffman code with 27 codewords.

An application example of V2V codes is the run-level coding of transform coefficients in MPEG-2 Video [39]. An often used variation of V2V codes is called run-length coding. In run-length coding, the number of successive occurrences of a particular alphabet letter, referred to as run, is transmitted using a variable-length code. In some applications, only runs for the most probable alphabet letter (including runs equal to 0) are transmitted and are always followed by a codeword for one of the remaining alphabet letters. In other applications, the codeword for a run is followed by a codeword specifying the alphabet letter, or vice versa. V2V codes are particularly attractive for binary iid sources. As we will show in sec. 3.5, a universal lossless source coding concept can be designed using V2V codes for binary iid sources in connection with the concepts of binarization and probability interval partitioning.

3.4 Elias Coding and Arithmetic Coding

Huffman codes achieve the minimum average codeword length among all uniquely decodable codes that assign a separate codeword to each element of a given set of alphabet letters or letter sequences. However, if the pmf for a symbol alphabet contains a probability mass that is close to one, a Huffman code with an average codeword length close to the entropy rate can only be constructed if a large number of symbols is coded jointly. Such a block Huffman code does however require a huge codeword table and is thus impractical for real applications. Additionally, a Huffman code for fixed- or variable-length vectors is not applicable or at least very inefficient for symbol sequences in which symbols with different alphabets and pmfs are irregularly interleaved, as it is often found in image and video coding applications, where the order of symbols is determined by a sophisticated syntax.

Furthermore, the adaptation of Huffman codes to sources with unknown or varying statistical properties is usually considered as too complex for real-time applications. It is desirable to develop a code construction method that is capable of achieving an average codeword length close to the entropy rate, but also provides a simple mechanism for dealing with nonstationary sources and is characterized by a complexity that increases linearly with the number of coded symbols.

The popular method of arithmetic coding provides these properties. The initial idea is attributed to P. Elias (as reported in [1]) and is also referred to as Elias coding. The first practical arithmetic coding schemes have been published by Pasco [61] and Rissanen [63]. In the following, we first present the basic concept of Elias coding and continue with highlighting some aspects of practical implementations. For further details, the interested reader is referred to [78], [58] and [65].

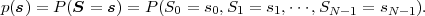

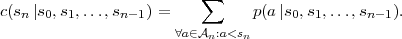

We consider the coding of symbol sequences s = {s0,s1,…,sN-1} that

represent realizations of a sequence of discrete random variables

S = {S0,S1,…,SN-1}. The number N of symbols is assumed to be

known to both encoder and decoder. Each random variable Sn can be

characterized by a distinct Mn-ary alphabet  n. The statistical properties

of the sequence of random variables S are completely described by the joint

pmf

n. The statistical properties

of the sequence of random variables S are completely described by the joint

pmf

A symbol sequence sa = {s0a,s1a, ,sN-1a} is considered to be less than

another symbol sequence sb = {s0b,s1b,

,sN-1a} is considered to be less than

another symbol sequence sb = {s0b,s1b, ,sN-1b} if and only if there

exists an integer n, with 0 ≤ n ≤ N - 1, so that

,sN-1b} if and only if there

exists an integer n, with 0 ≤ n ≤ N - 1, so that

| (3.34) |

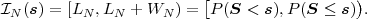

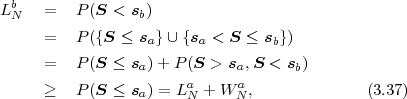

Using this definition, the probability mass of a particular symbol sequence s can written as

| (3.35) |

This expression indicates that a symbol sequence s can be represented by

an interval  N between two successive values of the cumulative

probability mass function P(S ≤ s). The corresponding mapping of

a symbol sequence s to a half-open interval

N between two successive values of the cumulative

probability mass function P(S ≤ s). The corresponding mapping of

a symbol sequence s to a half-open interval  N ⊂ [0,1) is given

by

N ⊂ [0,1) is given

by

| (3.36) |

The interval width WN is equal to the probability P(S = s) of the

associated symbol sequence s. In addition, the intervals for different

realizations of the random vector S are always disjoint. This can be shown

by considering two symbol sequences sa and sb, with sa < sb. The lower

interval boundary LNb of the interval  N(sb),

N(sb),

N(sa). Consequently, an N-symbol sequence s can be

uniquely represented by any real number v ∈

N(sa). Consequently, an N-symbol sequence s can be

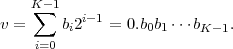

uniquely represented by any real number v ∈ N, which can be written as

binary fraction with K bits after the binary point,

N, which can be written as

binary fraction with K bits after the binary point,

| (3.38) |

In order to identify the symbol sequence s we only need to transmit the bit

sequence b = {b0,b1, ,bK-1}. The Elias code for the sequence of random

variables S is given by the assignment of bit sequences b to the N-symbol

sequences s.

,bK-1}. The Elias code for the sequence of random

variables S is given by the assignment of bit sequences b to the N-symbol

sequences s.

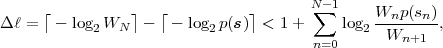

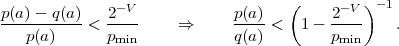

For obtaining codewords that are as short as possible, we should choose the real numbers v that can be represented with the minimum amount of bits. The distance between successive binary fractions with K bits after the binary point is 2-K. In order to guarantee that any binary fraction with K bits after the decimal point falls in an interval of size WN, we need K ≥-log 2WN bits. Consequently, we choose

| (3.39) |

where ⌈x⌉ represents the smallest integer greater than or equal to x. The binary fraction v, and thus the bit sequence b, is determined by

| (3.40) |

An application of the inequalities ⌈x⌉≥ x and ⌈x⌉ < x + 1 to (3.40) and (3.39) yields

| (3.41) |

which proves that the selected binary fraction v always lies inside the

interval  N. The Elias code obtained by choosing K = ⌈-log 2WN⌉

associates each N-symbol sequence s with a distinct codeword b.

N. The Elias code obtained by choosing K = ⌈-log 2WN⌉

associates each N-symbol sequence s with a distinct codeword b.

An important property of the Elias code is that the codewords can be

iteratively constructed. For deriving the iteration rules, we consider

sub-sequences s(n) = {s0,s1, ,sn-1} that consist of the first n symbols, with

1 ≤ n ≤ N, of the symbol sequence s. Each of these sub-sequences s(n) can

be treated in the same way as the symbol sequence s. Given the interval

width Wn for the sub-sequence s(n) = {s0,s1,…,sn-1}, the interval

width Wn+1 for the sub-sequence s(n+1) = {s(n),sn} can be derived by

,sn-1} that consist of the first n symbols, with

1 ≤ n ≤ N, of the symbol sequence s. Each of these sub-sequences s(n) can

be treated in the same way as the symbol sequence s. Given the interval

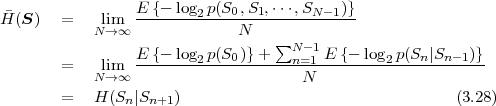

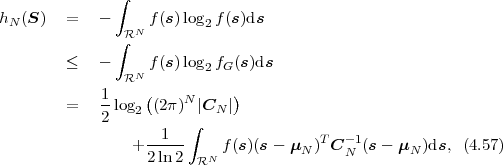

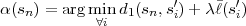

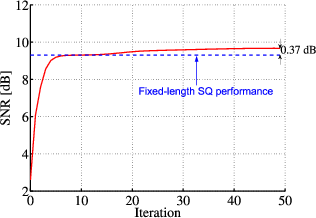

width Wn for the sub-sequence s(n) = {s0,s1,…,sn-1}, the interval